Making technology work the way you expect, and keeping it working that way, can be difficult at times. Configuration changes or software updates can put services into a broken or half-broken state, and the latter happened to us.

In the spirit of transparency, we are writing about the painful discovery that many of our Tor exit relays (at least a third of them) had been broken for weeks at the very least, and possibly months, without our knowledge.

I’d like to thank the team at the Tor Project for alerting us to the issue and explaining how to reproduce it, which ultimately led to the root cause being found and fixed.

Root cause

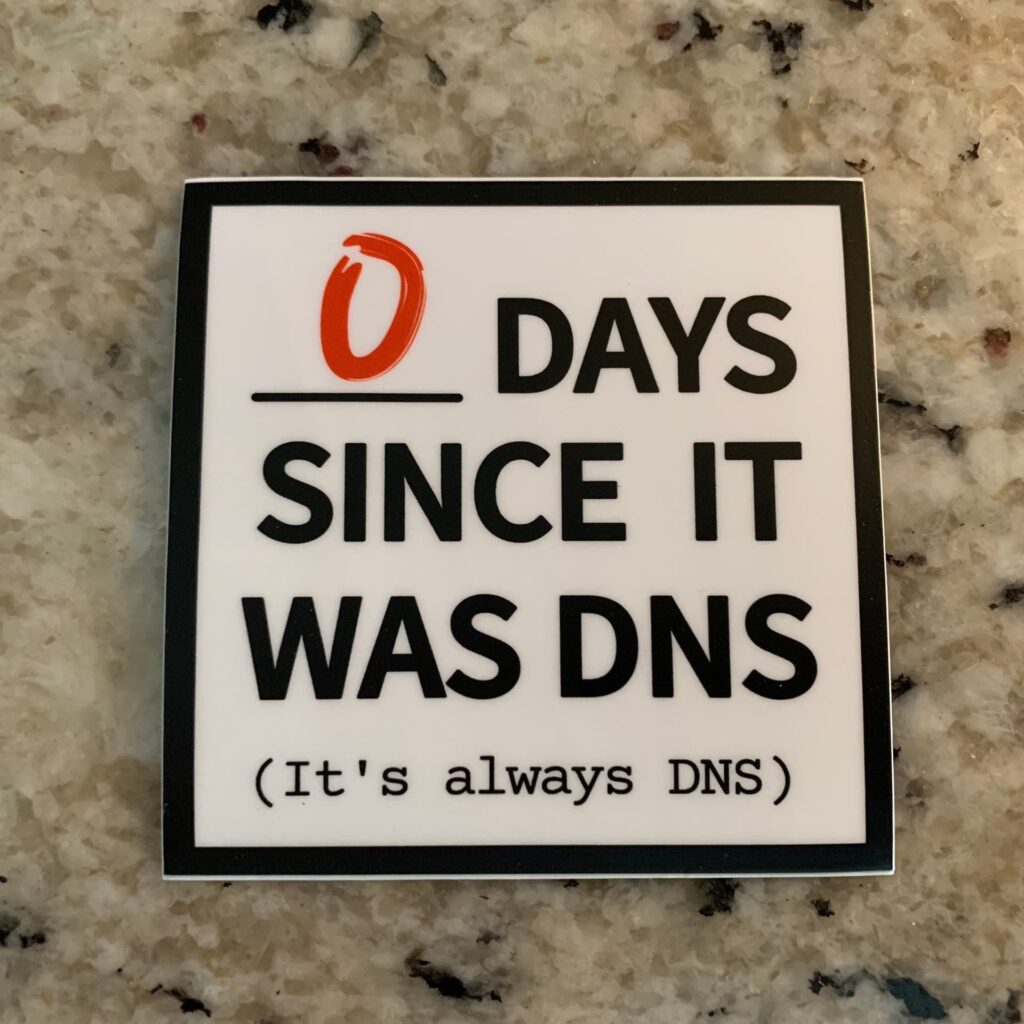

Based on the image, you can probably guess what the issue was.

It was DNS. Specifically, it was Tailscale’s MagicDNS feature. DNS queries were not being resolved, for reasons we still don’t understand. As a result, anyone whose traffic exited through one of the affected relays failed to reach nearly every domain or subdomain, because hostnames could not be resolved. Connecting directly to IP addresses worked just fine.

Before anyone starts blaming Tailscale, I want to be clear that we don’t know why MagicDNS failed the way it did; we only know that it did. Disabling MagicDNS on our exit relays resolved the issue entirely, and when we re-enabled it and tested again, the failure returned. As a result, we’ve left it off.

Technical analysis

The symptom, as reported by the nice people at Tor, was that DNS resolution seemed to fail.

We started by trying to reproduce the issue ourselves. This required pinning the Tor daemon on a local computer to the fingerprint of a specific exit relay that was exhibiting this strange behavior. For example, adding the line below to the local daemon’s torrc file forces it to send all exit traffic through that relay:

```
ExitNodes F34EE673122518873E717C128E35A389B72C7837
```

This fingerprint corresponds to our UnredactedSnowden relay.

We then configured one of our browsers to use the local SOCKS proxy that the Tor daemon listens on (127.0.0.1, port 9050), so that its traffic went through Tor.

Every attempt to connect to a website failed, but the reason was unclear: the browser did not display an error pointing to a DNS resolution problem.
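When a browser passes hostnames through Tor’s SOCKS port instead of resolving them locally, the lookup happens at the exit relay, which may be why the browser’s error did not point at DNS. A more direct check is Tor’s SOCKS5 RESOLVE extension (command 0xF0), which asks the exit to resolve a name and hand back the address. The sketch below is illustrative only, not what we ran: `resolve_via_tor` and its helpers are hypothetical names, it assumes the local daemon is pinned to the suspect relay with ExitNodes and listens on 127.0.0.1:9050, and it only handles IPv4 and IPv6 answers.

```python
import ipaddress
import socket
import struct

SOCKS_VERSION = 5
CMD_TOR_RESOLVE = 0xF0  # Tor-specific SOCKS5 command: resolve a hostname at the exit
ATYP_IPV4, ATYP_DOMAIN, ATYP_IPV6 = 1, 3, 4


def build_resolve_request(hostname: str) -> bytes:
    """SOCKS5 request: VER, CMD, RSV, ATYP=domain, length-prefixed name, port 0."""
    name = hostname.encode("idna")
    if len(name) > 255:
        raise ValueError("hostname too long for a SOCKS5 request")
    header = struct.pack("!BBBBB", SOCKS_VERSION, CMD_TOR_RESOLVE, 0, ATYP_DOMAIN, len(name))
    return header + name + b"\x00\x00"


def parse_resolve_reply(reply: bytes) -> str:
    """Return the resolved address from a complete SOCKS5 reply."""
    if reply[0] != SOCKS_VERSION:
        raise ValueError(f"unexpected SOCKS version {reply[0]}")
    if reply[1] != 0:
        raise LookupError(f"exit could not resolve the name (SOCKS reply {reply[1]:#04x})")
    if reply[3] == ATYP_IPV4:
        return str(ipaddress.IPv4Address(reply[4:8]))
    if reply[3] == ATYP_IPV6:
        return str(ipaddress.IPv6Address(reply[4:20]))
    raise ValueError(f"unexpected address type {reply[3]}")


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, or fail if the proxy closes the connection."""
    buf = b""
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("SOCKS proxy closed the connection")
        buf += chunk
    return buf


def resolve_via_tor(hostname: str, proxy=("127.0.0.1", 9050), timeout: float = 30.0) -> str:
    """Ask the (pinned) exit relay to resolve hostname via Tor's RESOLVE extension."""
    with socket.create_connection(proxy, timeout=timeout) as sock:
        sock.sendall(b"\x05\x01\x00")  # greeting: SOCKS5, one method, "no authentication"
        if recv_exact(sock, 2) != b"\x05\x00":
            raise ConnectionError("proxy did not accept unauthenticated SOCKS5")
        sock.sendall(build_resolve_request(hostname))
        head = recv_exact(sock, 4)  # VER, REP, RSV, ATYP
        if head[1] != 0:
            raise LookupError(f"exit could not resolve {hostname!r} (SOCKS reply {head[1]:#04x})")
        addr_len = {ATYP_IPV4: 4, ATYP_IPV6: 16}.get(head[3])
        if addr_len is None:
            raise ValueError(f"unexpected address type {head[3]}")
        # Address bytes are followed by a 2-byte port field
        return parse_resolve_reply(head + recv_exact(sock, addr_len + 2))
```

With the pin in place, `resolve_via_tor("domain.com")` should return an address on a healthy relay and raise `LookupError` on one affected by this kind of failure; the sketch deliberately does not depend on which SOCKS error code Tor reports.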

Since DNS was still the prime suspect, the easiest next step was to SSH into that exit relay and run tcpdump to capture all inbound and outbound packets on TCP or UDP port 53, using a command such as:

```
tcpdump -i any -n port 53
```

Once we did that, we discovered that nearly all DNS queries originating from Tor appeared to go out to the MagicDNS IP (100.100.100.100), but most of them never received a response. At that point, we knew it was indeed a DNS resolution problem.
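To put a number on “nothing was returned on most queries”, a capture saved as text can be tallied by direction. This is a minimal sketch that assumes tcpdump’s one-line summary format for `-i any -n`, as in the anonymized excerpt that follows; the regex and the `tally_dns` name are our own illustration, and lines in any other format are simply skipped.

```python
import re
from collections import Counter

# tcpdump's one-line summary when capturing with `-i any -n`, e.g.
# 17:11:52.164204 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 40707+ A? domain.com. (45)
SUMMARY = re.compile(
    r"^(?P<ts>\S+)\s+(?P<iface>\S+)\s+(?P<dir>In|Out)\s+IP6?\s+"
    r"(?P<src>\S+)\s+>\s+(?P<dst>\S+):\s+(?P<txid>\d+)"
)


def tally_dns(lines, resolver="100.100.100.100"):
    """Count DNS queries sent to `resolver` and responses it sent back."""
    counts = Counter(queries=0, responses=0)
    for line in lines:
        m = SUMMARY.match(line.strip())
        if m is None:
            continue  # skip blank lines and anything that is not a packet summary
        if m["dst"] == f"{resolver}.53":
            counts["queries"] += 1
        elif m["src"] == f"{resolver}.53":
            counts["responses"] += 1
    return counts
```

Fed the anonymized excerpt below line by line, it reports 10 queries and 2 responses.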

An anonymized example of what we saw:

```
17:11:52.164204 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 40707+ A? domain.com. (45)
17:11:52.172049 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 33552+ A? domain.com. (33)
17:11:52.203409 tailscale0 In IP 100.100.100.100.53 > 100.69.x.x.22065: 47343 NXDomain 0/1/0 (119)
17:11:52.321235 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 45366+ A? domain.com. (28)
17:11:52.321271 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 16617+ A? domain.com. (35)
17:11:52.321303 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 39612+ A? domain.com. (29)
17:11:52.352491 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 63111+ A? domain.com. (34)
17:11:52.383332 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 15513+ A? domain.com. (35)
17:11:52.501714 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 16308+ A? domain.com. (29)
17:11:52.532238 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 7697+ A? domain.com. (40)
17:11:52.540674 tailscale0 In IP 100.100.100.100.53 > 100.69.x.x.22065: 22472 0/1/0 (89)
17:11:52.544683 tailscale0 Out IP 100.69.x.x.22065 > 100.100.100.100.53: 8052+ A? domain.com. (47)
```

We tried several things:

- Switching the MagicDNS nameservers to different ones and restarting Tor.

- Rebooting the test relay, in case this was an odd Tailscale daemon, interface, or routing issue.

- Running `dig` from the command line on the test relay against the MagicDNS IP (100.100.100.100), which worked without issue.
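The `dig` check in the last item boils down to sending one UDP query to 100.100.100.100 on port 53 and looking at the response code. For anyone who wants to script the same check, here is a minimal standard-library sketch; `build_query`, `parse_header` and `query` are hypothetical helpers, and unlike modern `dig` it sends a plain RFC 1035 query with no EDNS options, so it is not byte-for-byte what `dig` sends.

```python
import random
import socket
import struct
from typing import Optional

RCODES = {0: "NOERROR", 1: "FORMERR", 2: "SERVFAIL", 3: "NXDOMAIN", 4: "NOTIMP", 5: "REFUSED"}


def build_query(name: str, txid: Optional[int] = None, qtype: int = 1) -> bytes:
    """Build a minimal RFC 1035 query: one question, recursion desired, no EDNS."""
    if txid is None:
        txid = random.randint(0, 0xFFFF)
    header = struct.pack("!HHHHHH", txid, 0x0100, 1, 0, 0, 0)  # 0x0100 = RD flag
    labels = name.rstrip(".").encode("idna").split(b".")
    qname = b"".join(bytes([len(label)]) + label for label in labels) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, 1)  # QTYPE (1 = A), QCLASS IN


def parse_header(data: bytes) -> dict:
    """Extract the transaction ID, response code and answer count from a reply."""
    txid, flags, _qd, ancount, _ns, _ar = struct.unpack("!HHHHHH", data[:12])
    rcode = flags & 0x000F
    return {"id": txid, "rcode": RCODES.get(rcode, str(rcode)), "answers": ancount}


def query(server: str, name: str, timeout: float = 2.0) -> dict:
    """Send one UDP query to server:53, roughly what `dig @server name` checks."""
    txid = random.randint(0, 0xFFFF)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)  # recvfrom raises socket.timeout if no reply arrives
        sock.sendto(build_query(name, txid), (server, 53))
        while True:
            data, _addr = sock.recvfrom(4096)
            # Ignore stray packets whose ID does not match our query
            if len(data) >= 12 and data[:2] == struct.pack("!H", txid):
                return parse_header(data)
```

`query("100.100.100.100", "domain.com")` returns a dict with the ID, response code name and answer count, or raises `socket.timeout` if nothing comes back in time.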

We were at a loss and couldn’t figure out what was happening. We then decided to disable MagicDNS on the test relay to see what would happen. It worked: DNS queries started flowing and receiving resolved responses from the same nameservers, now queried directly.

We subsequently disabled MagicDNS on the rest of the exit relays with an ad hoc Ansible shell command.

Our conclusion

The problem appeared to lie in the abstraction MagicDNS provides: with the feature enabled, queries originating from Tor appeared to fail more than 99% of the time, yet queries from dig on the command line always appeared to work. We suspect MagicDNS fails in some way when too many queries are directed at its 100.100.100.100 IP, which is seemingly routed out the tailscale0 interface (and subsequently onto the physical interface). However, this doesn’t fully add up, since we would then expect queries from dig to fail as well.
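One difference visible in the capture is that Tor sent every query from a single source port (22065 in the excerpt), each with a fresh transaction ID, whereas a single `dig` invocation sends one query and waits for it. A hypothetical way to probe the load hypothesis is to replay that pattern: fire a burst of queries from one UDP socket and count how many replies come back, matched by ID. We have not run this against our relays; `burst` and `build_query` are illustrative names, and the sketch assumes plain UDP over IPv4 with A-record queries.

```python
import random
import socket
import struct
import time


def build_query(name: str, txid: int) -> bytes:
    """Minimal RFC 1035 A-record query with recursion desired."""
    header = struct.pack("!HHHHHH", txid, 0x0100, 1, 0, 0, 0)
    labels = name.rstrip(".").encode("idna").split(b".")
    qname = b"".join(bytes([len(label)]) + label for label in labels) + b"\x00"
    return header + qname + struct.pack("!HH", 1, 1)


def burst(server: str, names, port: int = 53, wait: float = 3.0):
    """Send every query from ONE UDP socket (one source port), then count replies.

    Returns (answered, sent), matching replies to queries by transaction ID.
    """
    pending = {}
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for name in names:
            txid = random.randint(0, 0xFFFF)
            while txid in pending:  # keep IDs unique within the burst
                txid = random.randint(0, 0xFFFF)
            pending[txid] = name
            sock.sendto(build_query(name, txid), (server, port))

        answered = set()
        deadline = time.monotonic() + wait
        while len(answered) < len(pending):
            remaining = deadline - time.monotonic()
            if remaining <= 0:
                break
            sock.settimeout(remaining)
            try:
                data, _addr = sock.recvfrom(4096)
            except socket.timeout:
                break
            if len(data) >= 12:
                (txid,) = struct.unpack("!H", data[:2])
                if txid in pending:
                    answered.add(txid)
    return len(answered), len(pending)
```

Comparing `burst("100.100.100.100", names)` for a few hundred names with MagicDNS enabled and disabled would show whether the answered fraction collapses under this single-port, high-rate pattern.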

We may never know exactly what happened, and we don’t want to leave the relays broken long enough to find out. At this point, it’s safe to say that we are leaving MagicDNS disabled on our Tor exit relays for the foreseeable future.

Shortly after we resolved the issue, traffic through our Tor exit relays shot up beyond previously normal levels and, as of this writing, is at our full capacity.

In the near future, we will explore running our own local DNS resolver on each exit relay. We’ve done this in the past, but had to move away from it because an overload of bogus queries originating from Tor also caused DNS resolution failures. DNS over HTTPS (DoH) and DNS over TLS (DoT) are also good options we may explore further.
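For reference, DoH (RFC 8484) carries the same DNS wire-format message over HTTPS; in the GET form, the message is base64url-encoded, without padding, into a `dns` query parameter. The sketch below illustrates that encoding with the standard library only. The endpoint URL is a placeholder, the function names are hypothetical, and this is not a configuration we have deployed. Following the RFC’s recommendation, it sets the DNS ID to 0 so that identical GET requests can be cached.

```python
import base64
import struct
import urllib.request


def build_query(name: str, qtype: int = 1) -> bytes:
    """RFC 1035 query with ID 0, as RFC 8484 recommends for cache-friendly GETs."""
    header = struct.pack("!HHHHHH", 0, 0x0100, 1, 0, 0, 0)  # ID 0, RD set, one question
    labels = name.rstrip(".").encode("idna").split(b".")
    qname = b"".join(bytes([len(label)]) + label for label in labels) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, 1)  # QTYPE, QCLASS IN


def doh_get_url(endpoint: str, name: str) -> str:
    """Encode the query as RFC 8484's `dns` parameter: base64url without padding."""
    encoded = base64.urlsafe_b64encode(build_query(name)).rstrip(b"=").decode("ascii")
    return f"{endpoint}?dns={encoded}"


def doh_query(endpoint: str, name: str, timeout: float = 5.0) -> bytes:
    """Fetch the raw DNS wire-format answer over HTTPS."""
    request = urllib.request.Request(
        doh_get_url(endpoint, name),
        headers={"Accept": "application/dns-message"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read()
```

`doh_query("https://doh.example/dns-query", "domain.com")` would return the raw response bytes, whose 12-byte header decodes like any UDP DNS reply. DoT (RFC 7858) instead sends the same message over a TLS connection to port 853, prefixed with a two-byte length as in DNS over TCP.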

We hope you found this interesting and insightful. If you enjoy what we do, please consider making a donation. Unredacted is a non-profit organization that provides free and open services that help people evade censorship and protect their right to privacy.